Hello from Niantic Research!

When Niantic launched Pokémon GO in 2016, one of our goals was to empower people to see the world with new eyes. That vision continues to drive our research team as we push the boundaries of breakthrough technology and explore new frontiers of augmented reality. Our research team balances bringing technology to the platform that can be used today with thinking 30 years out. In this blog post, we're going to share some of that work and discuss how we’re building experiences from the ground up, literally. Read on to find out what we mean…

Guess what I see

We take for granted our incredible ability to perceive things. When we view a scene, we understand what we see, as well as understand what we CAN’T see, and we fill in the gaps with information. Take for example the image below.

While you can’t see the ground behind the tree, you know it’s there. You recognize the ground. You innately understand that a tree is circular. While we take this for granted, you’re doing a very complicated processing task, translating a 2D image to a 3D space. Here’s what’s happening in your head...

While this ability is intuitive to humans, it’s completely foreign to a computer. It’s not good at understanding what “ground” or “tree” is, let alone guessing 3D space from a single 2D image.

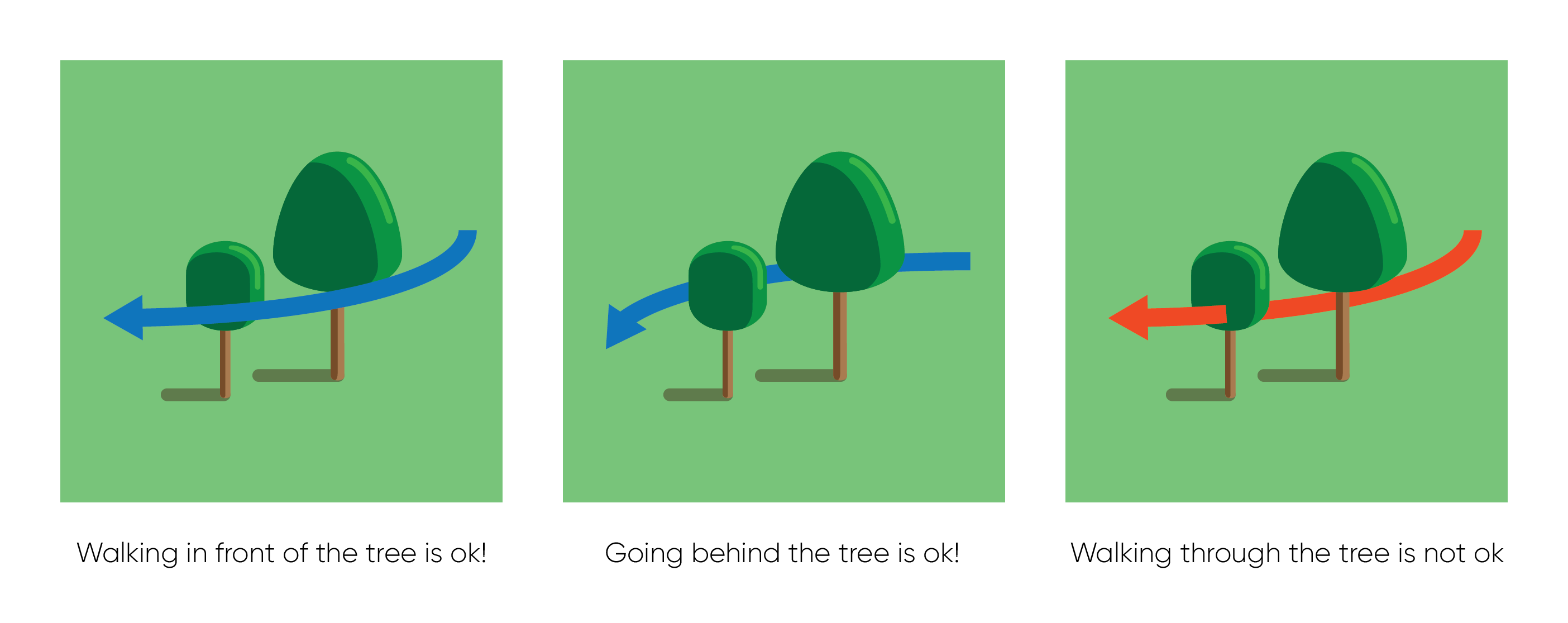

Teaching a computer to predict a 3D space is a very hard problem to solve, but we believe it’s important because it makes AR experiences more convincing. If we want to place a digital character in a scene, it must interact with the physical world realistically. If a character were to walk into or, even worse, through physical objects, the believability of the experience would be instantly broken.

To address this problem, we need to help the computer do what you easily do in your head: create a 3D map out of incomplete information from a 2D image. We set out to tackle this by doing two things: finding the ground and estimating which parts of the ground are occupied.

Turning a 2D image into traversable 3D Space

… technically, we’re “introducing a model to predict the geometry of both visible and occluded traversable surfaces, given a single RGB image as input.”

Basically, we’re able to turn 2D images into 3D space AND we’re able to estimate what space is actually traversable. Here’s what our technology looks like when it’s running.

Red represents “usable” ground. You’ll note that the ground under certain items, like the bin below, is unusable or occupied.
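As a toy illustration of the two steps described earlier (find the ground, then work out which parts of it are occupied), here is a minimal Python sketch. It is not our model: the grids, the `traversable_cells` helper, and the bin placement are all hypothetical, hand-written values standing in for what a network would predict from an image.

```python
# Illustrative toy example only; not Niantic's model or data.
# Each grid is a top-down map: True means the property holds in that cell.

def traversable_cells(ground, occupied):
    """Return a grid that is True where there is ground and nothing sits on it."""
    return [
        [g and not o for g, o in zip(ground_row, occupied_row)]
        for ground_row, occupied_row in zip(ground, occupied)
    ]

# Hypothetical 3x4 scene: ground everywhere, with a bin covering two cells.
ground = [[True] * 4 for _ in range(3)]
occupied = [
    [False, False, False, False],
    [False, True,  True,  False],
    [False, False, False, False],
]

usable = traversable_cells(ground, occupied)
```

In this sketch, the cells under the bin come out as not usable, while the rest of the ground stays available for a character to stand on.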

Putting it all together

Our goal is to make more convincing AR experiences. By utilizing the aforementioned techniques, we can more believably place digital characters in the real world. For example, let’s see what we can do when we feed our 3D map back into a 2D image.

Below is an example of a character pathway through a scene when no technology is applied. The character has no understanding of the environment and unrealistically traverses over everything.

The next version is an improvement because the character now recognizes the ground. But the limitation is that it only recognizes the VISIBLE ground.

This is our version. Because the character recognizes both visible and hidden ground, it can move behind things. Our character can now explore a lot more of its world.
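To make the difference between the last two versions concrete, here is a small Python sketch that plans a route on a top-down grid, first using only visible ground and then using visible plus hidden ground. Everything in it is an assumption for illustration: the `scene` layout, the `V`/`H`/`#` labels, and the use of breadth-first search are ours for this example and are not taken from the research paper.

```python
from collections import deque

def shortest_path_length(walkable, start, goal):
    """Breadth-first search on a grid; returns the number of steps, or None."""
    rows, cols = len(walkable), len(walkable[0])
    queue = deque([(start, 0)])
    seen = {start}
    while queue:
        (r, c), steps = queue.popleft()
        if (r, c) == goal:
            return steps
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and walkable[nr][nc] and (nr, nc) not in seen:
                seen.add((nr, nc))
                queue.append(((nr, nc), steps + 1))
    return None

def mask(scene, allowed):
    """Mark a cell walkable if its label is one of the allowed characters."""
    return [[ch in allowed for ch in row] for row in scene]

# Hypothetical scene seen from above; the camera looks from the bottom row.
# 'V' = visible ground, 'H' = ground hidden behind an object, '#' = occupied.
scene = [
    "VHHV",
    "V##V",
]
start, goal = (1, 0), (1, 3)

visible_only = mask(scene, "V")
visible_and_hidden = mask(scene, "VH")

blocked = shortest_path_length(visible_only, start, goal)       # None
behind = shortest_path_length(visible_and_hidden, start, goal)  # 5
```

With only visible ground, the character has no route to the other side of the object. Once the hidden ground is included, it can walk behind the object in five steps.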

What’s unique about this work is it’s really efficient. In the video below, you’ll see a familiar character traverse the real world believably.

This video was made in 2018, but it was computed on desktop computers. Our current methodology is much more efficient and can run easily on modern smartphones.

Bridging reality and augmented reality

In summary, this work is but one of many efforts to advance the state of augmented reality and ultimately deliver better AR experiences to more people: on mobile phones, glasses, and beyond!

We can't wait to roll these advances into our games and experiences to make AR more believable, on even more devices. While we’re focused on certain kinds of experiences today, we know this work can be applied to many other fields such as robotics. As such, Niantic Research will continue to contribute its work openly to the scientific community so we can continue to advance the state of computer vision and AR, together. Follow our work at github.com/nianticlabs

As this blog post was intended to share what we’re doing with a wide audience, we didn’t go into the more complicated details. If you’d like to learn more, you can read our full research paper now, and we’ll present a detailed breakdown in June during CVPR 2020, one of the world’s most influential computer vision conferences.

-Michael Firman, PhD